Sections

- Just Make Great Content…

- Search Engine Engineering Fear

- Ignore The Eye Candy, It’s Poisoned

- Below The Fold = Out Of Mind

- Coercion Which Failed

- Embrace, Extend, Extinguish

- Dumb Pipes, Dumb Partnerships

- “User” Friendly

- The Numbers Can’t Work

- Mobile Search Index

- Tracking Users

Just Make Great Content…

Remember the whole shtick about good, legitimate, high-quality content being created for readers without concern for search engines – as though search engines did not even exist?

Whatever happened to that?

We quickly shifted from the above “ideology” to this:

The red triangle/exclamation point icon was arrived at after the Chrome team commissioned research around the world to figure out which symbols alarmed users the most.

Search Engine Engineering Fear

Google is explicitly spreading the message that they are doing testing on how to create maximum fear to try to manipulate & coerce the ecosystem to suit their needs & wants.

At the same time, the Google AMP project is being used as the foundation of effective phishing campaigns.

Scare users off of using HTTP sites AND host phishing campaigns.

Killer job Google.

Someone deserves a raise & some stock options. Unfortunately that person is in the PR team, not the product team.

Ignore The Eye Candy, It’s Poisoned

I’d like to tell you that I was preparing the launch of https://amp.secured.mobile.seobook.com but awareness of past ecosystem shifts makes me unwilling to make that move.

I see it as arbitrary hoop jumping not worth the pain.

If you are an undifferentiated publisher without much in the way of original thought, then jumping through the hoops makes sense. But if you deeply care about a topic and put a lot of effort into knowing it well, there’s no reason to do the arbitrary hoop jumping.

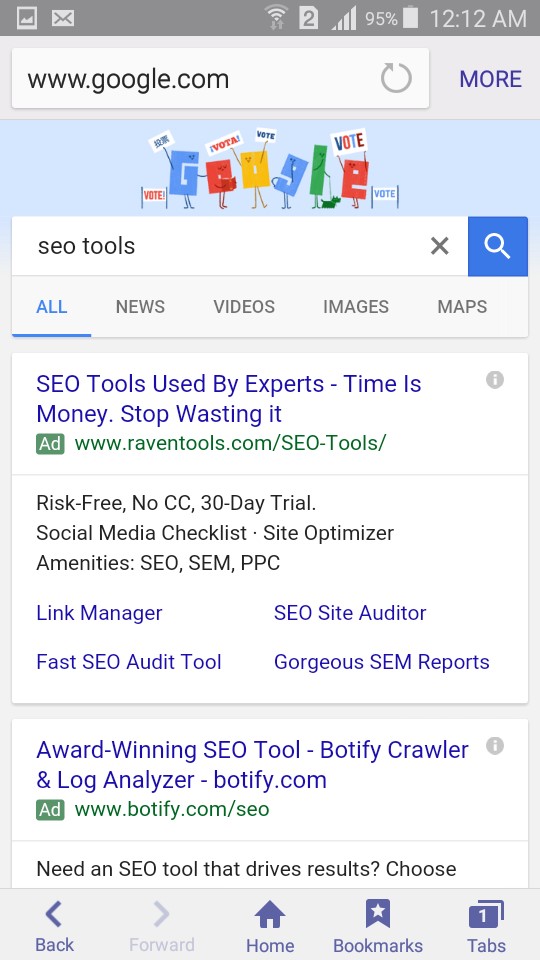

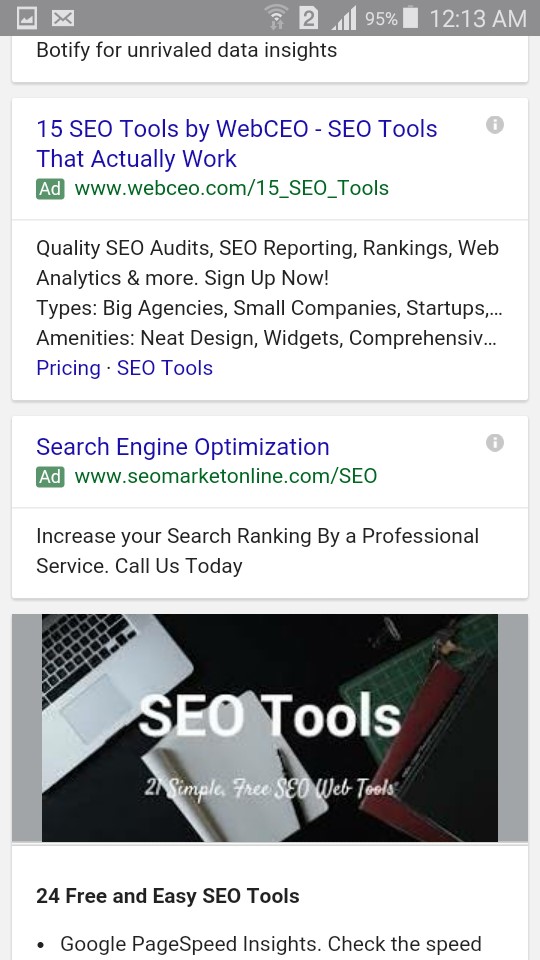

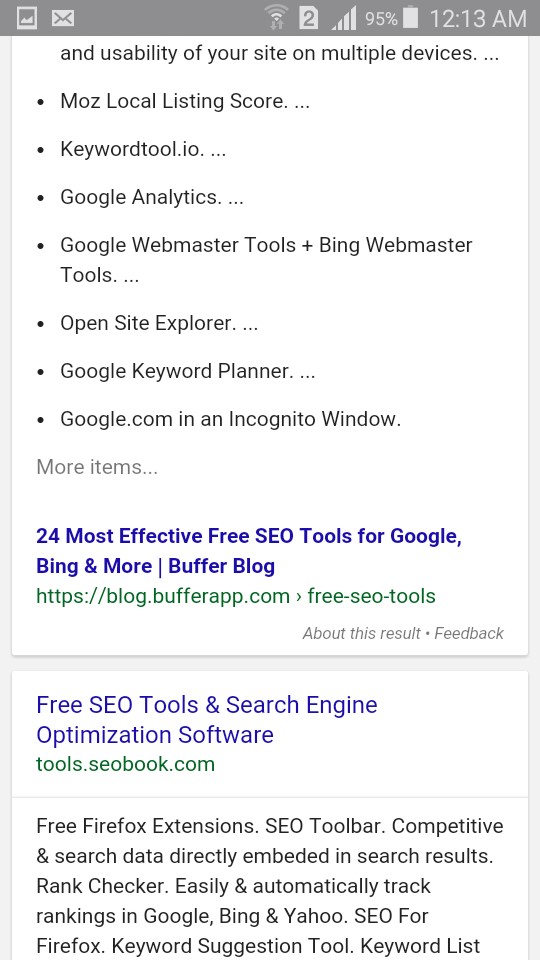

Remember how mobilegeddon was going to be the biggest thing ever? Well, I never updated our site layout here & we still outrank a company which raised & spent tens of millions of dollars for core industry terms like [seo tools].

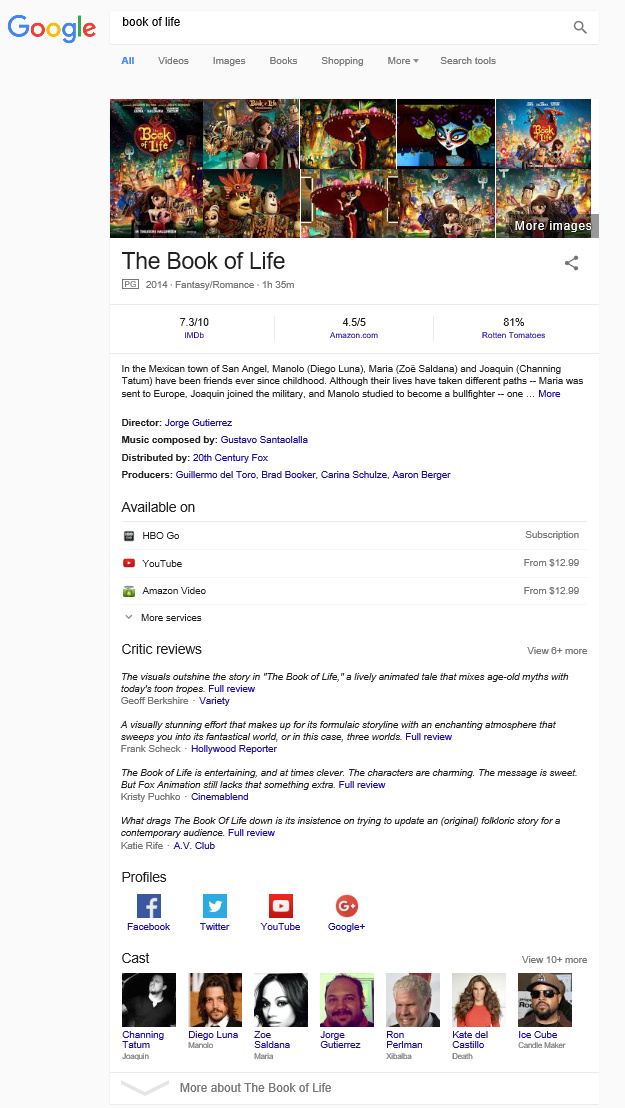

Though it is also worth noting that after factoring in increased ad load with small screen sizes & the scrape graph featured answer stuff, a #1 ranking no longer gets it done, as we are well below the fold on mobile.

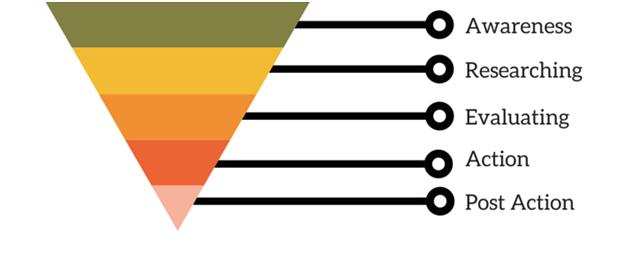

Below The Fold = Out Of Mind

In the above example I am not complaining about ranking #5 and wishing I ranked #2, but rather stating that ranking #1 organically has little to no actual value when it is a couple screens down the page.

Google indicated their interstitial penalty might apply to pop ups that appear on scroll, yet Google feels free to install a toxic, enhanced version of the Diggbar at the top of AMP pages, which persistently eats 15% of the screen & can’t be dismissed. An attempt to dismiss the bar leads the person back to Google to click on a listing other than your site.

As bad as I may have made mobile search results appear earlier, I was perhaps being a little too kind. Google doesn’t even have mass adoption of AMP yet & they already have 4 AdWords ads in their mobile search results AND when you scroll down the page they are testing an ugly “back to top” button which outright blocks a user’s view of the organic search results.

What happens when Google suggests what people should read next as an overlay on your content & sells that as an ad unit where if you’re lucky you get a tiny taste of the revenues?

Is it worth doing anything that makes your desktop website worse in an attempt to try to rank a little higher on mobile devices?

Given the small screen size of phones & the heavy ad load, the answer is no.

I realize that optimizing a site design for mobile or desktop is not mutually exclusive. But it is an issue we will revisit later on in this post.

Coercion Which Failed

Many people new to SEO likely don’t remember the importance of using Google Checkout integration to lower AdWords ad pricing.

You either supported Google Checkout & got about a 10% CTR lift (& thus 10% reduction in click cost) or you failed to adopt it and got priced out of the market on the margin difference.
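To put rough numbers on that CTR lift: under the simplified textbook model where ad rank is bid multiplied by click-through rate (an assumption for illustration, not Google's actual auction formula), a 10% lift in CTR lets an advertiser hold the same position with a bid about 9% lower, which is close to the 10% figure above. The function name and the $1.00 base click price in this Python sketch are hypothetical.

```python
def cpc_for_same_rank(base_cpc: float, ctr_lift: float) -> float:
    """Click price needed to hold the same ad rank after a relative CTR lift.

    Assumes the simplified model ad_rank = bid * CTR, which is an
    illustration only and not Google's actual auction formula.
    """
    return base_cpc / (1.0 + ctr_lift)


base_cpc = 1.00  # hypothetical click price in dollars
new_cpc = cpc_for_same_rank(base_cpc, 0.10)  # 10% CTR lift from the badge
saving = 1.0 - new_cpc / base_cpc            # fraction saved per click

print(f"new CPC: ${new_cpc:.3f}, saving per click: {saving:.1%}")
```

On thin retail margins, a saving of that size per click is the whole difference between bidding profitably & being priced out.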

And if you chose to adopt it, the bad news was you were then spending yet again to undo it when the service was no longer worth running for Google.

How about when Google first started hyping HTTPS & publishers using AdSense saw their ad revenue crash because the ads were no longer anywhere near as relevant?

Oops.

Not like Google cared much, as it is their goal to shift as much of the ad spend as they can onto Google.com & YouTube.

Google is now testing product ads on YouTube.

It is not an accident that Google funds an ad blocker which allows ads to stream through on Google.com while leaving ads blocked across the rest of the web.

Android Pay might be worth integrating. But then it also might go away.

It could be like Google’s authorship. Hugely important & yet utterly trivial.

Faces help people trust the content.

Then they are distracting visual clutter that needs to be expunged.

Then they once again re-appear but ONLY on the Google Home Service ad units.

They were once again good for users!!!

Neat how that works.

Embrace, Extend, Extinguish

Or it could be like Google Reader. A free service which defunded all competing products & then was shut down because it didn’t have a legitimate business model due to it being built explicitly to prevent competition. With the death of Google Reader many blogs also slid into irrelevancy.

Their FeedBurner acquisition was icing on the cake.

Techdirt is known for generally being pro-Google & they recently summed up FeedBurner nicely:

Thanks, Google, For Fucking Over A Bunch Of Media Websites – Mike Masnick

Ultimately Google is a horrible business partner.

And they are an even worse one if there is no formal contract.

Dumb Pipes, Dumb Partnerships

They tried their best to force broadband providers to be dumb pipes. At the same time they promoted regulation which will prevent broadband providers from tracking their own users the way that Google does, all the while broadening out Google’s privacy policy to allow personally identifiable web tracking across their network. Once Google knew they would retain an indefinite tracking advantage over broadband providers they were free to rescind their (heavily marketed) free tier of Google Fiber & they halted the Google Fiber build out.

When Google routinely acts so anti-competitive & abusive it is no surprise that some of the “standards” they propose go nowhere.

You can only get screwed so many times before you adopt a spirit of ambivalence to the avarice.

Google is the type of “partner” that conducts security opposition research on their leading distribution partner, while conveniently ignoring nearly a billion OTHER Android phones with existing security issues that Google can’t be bothered with patching.

Deliberately screwing direct business partners is far worse than coding algorithms which belligerently penalize some competing services all the while ignoring that the payday loan shop funded by Google leverages doorway pages.

“User” Friendly

BackChannel recently published an article foaming at the mouth promoting the excitement of Google’s AI:

“This 2016-to-2017 transition is going to move us from systems that are explicitly taught to ones that implicitly learn.” … the engineers might make up a rule to test against, for instance, that “usual” might mean a place within a 10-minute drive that you visited three times in the last six months. “It almost doesn’t matter what it is — just make up some rule,” says Huffman. “The machine learning starts after that.”
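The throwaway rule Huffman describes is simple enough to write down. Here is a minimal Python sketch of it, assuming a hypothetical list of visit dates & a hypothetical drive-time estimate as inputs. The function name, the parameter names & the 183-day approximation of six months are mine, not Google's.

```python
from datetime import date, timedelta


def is_usual_place(visit_dates, drive_minutes, today,
                   max_drive_minutes=10, min_visits=3, window_days=183):
    """Hand-written starting rule: a place is "usual" if it is within a
    10-minute drive and was visited at least three times in roughly the
    last six months. All thresholds are the made-up rule from the quote."""
    cutoff = today - timedelta(days=window_days)
    recent_visits = [d for d in visit_dates if cutoff <= d <= today]
    return drive_minutes <= max_drive_minutes and len(recent_visits) >= min_visits


# Hypothetical visit history for one place
visits = [date(2016, 6, 1), date(2016, 8, 15), date(2016, 10, 20)]
today = date(2016, 11, 1)

nearby = is_usual_place(visits, drive_minutes=8, today=today)
far_away = is_usual_place(visits, drive_minutes=25, today=today)
print(nearby, far_away)
```

The point of the quote is that the exact thresholds barely matter: a rule like this only seeds the system, & the learned model replaces it afterward.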

The part of the article I found most interesting was the following bit:

After three years, Google had a sufficient supply of phonemes that it could begin doing things like voice dictation. So it discontinued the [phone information] service.

Google launches “free” services with an ulterior data motive & then when it suits their needs, they’ll shut them off and leave users in the cold.

As Google keeps advancing their AI, what do you think happens to your AMP content they are hosting? How much do they squeeze down on your payout percentage on those pages? How long until the AI is used to recap / rewrite content? What ad revenue do you get when Google offers voice answers pulled from your content but sends you no visitor?

The Numbers Can’t Work

A recent Wall Street Journal article highlighting the fast ad revenue growth at Google & Facebook also mentioned how the broader online advertising ecosystem was doing:

Facebook and Google together garnered 68% of spending on U.S. online advertising in the second quarter—accounting for all the growth, Mr. Wieser said. When excluding those two companies, revenue generated by other players in the U.S. digital ad market shrank 5%

The issue is NOT that online advertising has stalled, but rather that Google & Facebook have choked off their partners from tasting any of the revenue growth. This problem will only get worse as mobile grows to a larger share of total online advertising:

By 2018, nearly three-quarters of Google’s net ad revenues worldwide will come from mobile internet ad placements. – eMarketer

Media companies keep trusting these platforms with greater influence over their business & these platforms keep screwing those same businesses repeatedly.

You pay to get likes, but that is no longer enough as edgerank declines. Thanks for adopting Instant Articles, but users would rather see live videos & read posts from their friends. You are welcome to pay once again to advertise to the following you already built. The bigger your audience, the more we will charge you! Oh, and your direct competitors can use people liking your business as an ad targeting group.

Worse yet, Facebook & Google are even partnering on core Internet infrastructure.

In his interview with Obama tonight, @billmaher suggested the news business should be not-for-profit. Mission accomplished, thank Facebook. – Downtown Josh Brown (@ReformedBroker) November 5, 2016

Any hope of AMP turning the corner on the revenue front is a “no go”:

“We want to drive the ecosystem forward, but obviously these things don’t happen overnight,” Mr. Gingras said. “The objective of AMP is to have it drive more revenue for publishers than non-AMP pages. We’re not there yet”.

Publishers who are critical of AMP were reluctant to speak publicly about their frustrations, or to remove their AMP content. One executive said he would not comment on the record for fear that Google might “turn some knob that hurts the company.”

Look at that.

Leadership through fear once again.

At least they are consistent.

As more publishers adopt AMP, each publisher in the program will get a smaller share of the overall pie.

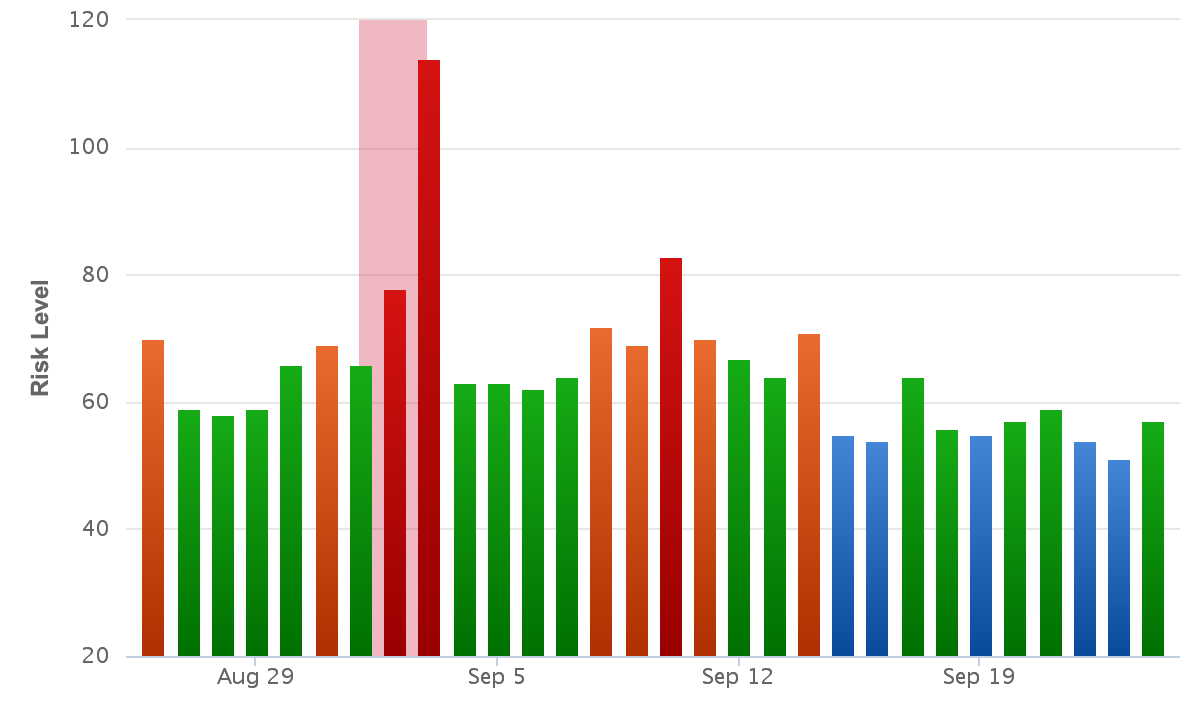

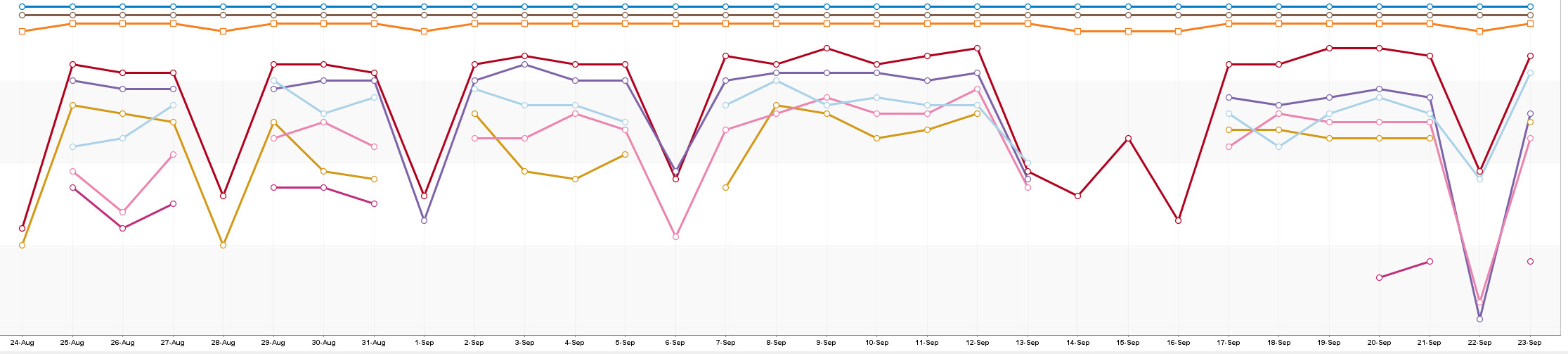

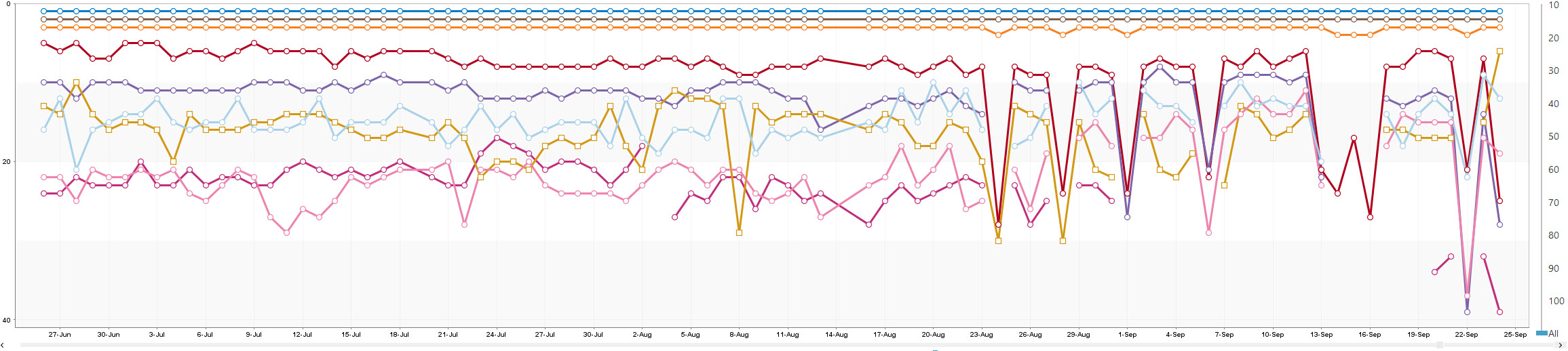

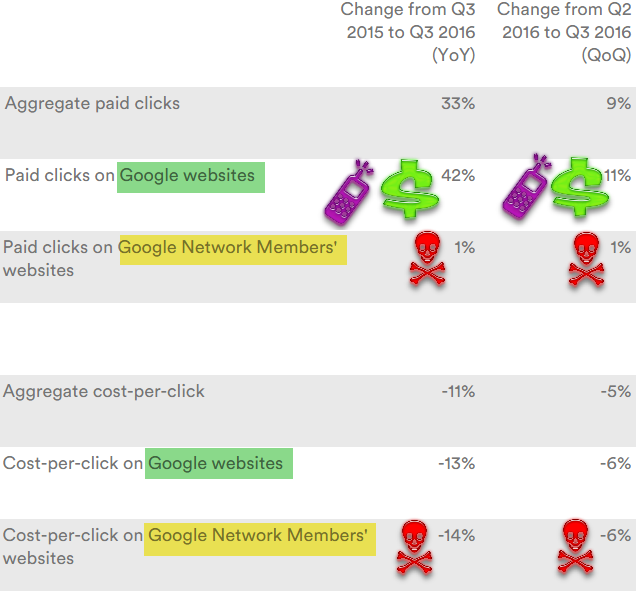

Just look at Google’s quarterly results for their current partners. They keep showing Google growing their ad clicks at 20% to 40% while partners oscillate between -15% and +5% quarter after quarter, year after year.

In the past quarter Google grew their ad clicks 42% YoY by pushing a bunch of YouTube auto play video ads, faster search growth in third world markets with cheaper ad prices, driving a bunch of lower quality mobile search ad clicks (with 3 then 4 ads on mobile) & increasing the percent of ad clicks on “own brand” terms (while sending the FTC after anyone who agrees to not cross bid on competitors’ brands).

The lower quality video ads & mobile ads in turn drove their average CPC on their sites down 13% YoY.
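As a back-of-the-envelope check on those two figures: if click revenue is simply paid clicks multiplied by average CPC, & if both percentages describe the same set of Google-owned sites (both are assumptions on my part), the implied growth can be worked out as below. The function name is hypothetical.

```python
def implied_revenue_growth(click_growth: float, cpc_change: float) -> float:
    """Year-over-year revenue change implied by a change in click volume
    and a change in average cost per click (revenue = clicks * CPC)."""
    return (1.0 + click_growth) * (1.0 + cpc_change) - 1.0


# 42% more clicks at a 13% lower average CPC
growth = implied_revenue_growth(0.42, -0.13)
print(f"implied click revenue growth: {growth:.1%}")
```

In other words, even with cheaper clicks, volume growth of that size still leaves Google's own properties growing click revenue by more than a fifth under these assumptions.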

The partner network is relatively squeezed out on mobile, which makes it shocking to see the partner CPC off more than core Google, with a 14% YoY decline.

What ends up happening is eventually the media outlets get sufficiently defunded to where they are sold for a song to a tech company or an executive at a tech company. Alibaba buying SCMP is akin to Jeff Bezos buying The Washington Post.

The Wall Street Journal recently laid off reporters. The New York Times announced they were cutting back local cultural & crime coverage.

If news organizations of that caliber can’t get the numbers to work then the system has failed.

The Guardian is literally incinerating over 5 million pounds per month. ABC is staging fake crime scenes (that’s one way to get an exclusive).

The Tribune Company, already through bankruptcy & perhaps the dumbest of the lot, plans to publish thousands of AI assisted auto-play videos in their articles every day. That will guarantee their user experience on their owned & operated sites is worse than just about anywhere else their content gets distributed to, which in turn means they are not only competing against themselves but they are making their own site absolutely redundant & a chore to use.

That the Denver Guardian (an utterly fake paper running fearmongering false stories) goes viral is just icing on the cake.

Look at this brazen, amazing garbage. Facebook has become the world’s leading distributor of lies. https://t.co/oueWUiydJO – Matt Pearce (@mattdpearce) November 6, 2016

many Facebook users wish to connect with people and things that confirm their pre-existing opinions, whether or not they are true. … Giving people what they want to see will always draw more attention than making them work for it, in rather the same way that making up news is cheaper and more profitable than actually reporting the truth. – Ben Thompson

These tech companies are literally reshaping society & are sucking the life out of the economy, destroying adjacent markets & bulldozing regulatory concerns, all while offloading costs onto everyone else around them.

The crumbling of the American dream is a purple problem, obscured by solely red or solely blue lenses. Its economic and cultural roots are entangled, a mixture of government, private sector, community and personal failings. But the deepest root is our radically shriveled sense of “we.” … Until we treat the millions of kids across America as our own kids, we will pay a major economic price, and talk of the American dream will increasingly seem cynical historical fiction.

And the solution to killing the middle class, is, of course, to kill the middle class:

“We are going to raise taxes on the middle class” -Hillary Clinton #NeverHilla… (Vine by @USAforTrump2016) https://t.co/veEiZnfbkH – JKO (@jko417) November 6, 2016

An FTC report recommended suing Google for their anti-competitive practices, but no suit was brought. The US Copyright Office Register was relieved of her job after she went against Google’s views on set top boxes. Years ago many people saw where this was headed:

“This is a major affront to copyright,” said songwriter and music publisher Dean Kay. “Google seems to be taking over the world – and politics … Their major position is to allow themselves to use copyright material without remuneration. If the Copyright Office head is towing the Google line, creators are going to get hurt.”

…

Singer Don Henley said Pallante’s ouster was “an enormous blow” to artists. “She was a champion of copyright and stood up for the creative community, which is one of the things that got her fired,” he said. … [Pallante’s replacement] Hayden “has a long track record of being an activist librarian who is anti-copyright and a librarian who worked at places funded by Google.”

And in spite of the growing importance of tech, media coverage of the industry is a trainwreck:

This is what it’s like to be a technology reporter in 2016. Freebies are everywhere, but real access is scant. Powerful companies like Facebook and Google are major distributors of journalistic work, meaning newsrooms increasingly rely on tech giants to reach readers, a relationship that’s awkward at best and potentially disastrous at worst.

Being a conduit breeds exclusives. Challenging the grand narrative gets one blackballed.

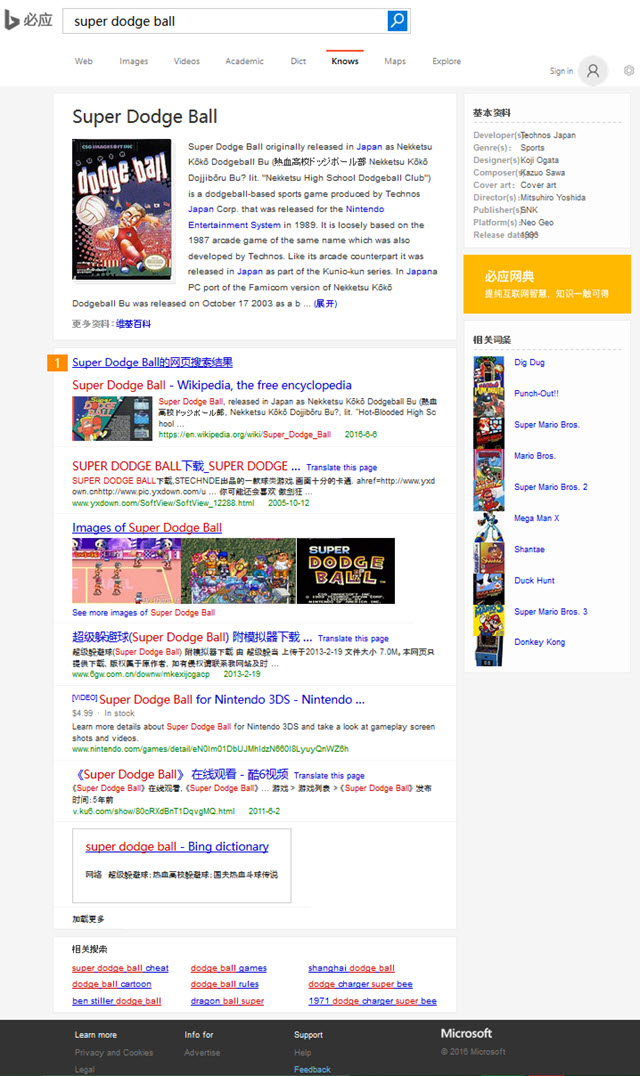

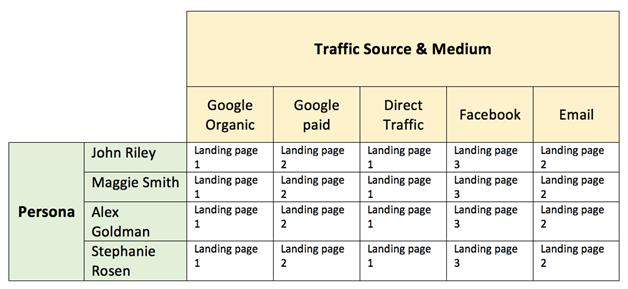

Mobile Search Index

Google announced they are releasing a mobile-first search index:

Although our search index will continue to be a single index of websites and apps, our algorithms will eventually primarily use the mobile version of a site’s content to rank pages from that site, to understand structured data, and to show snippets from those pages in our results. Of course, while our index will be built from mobile documents, we’re going to continue to build a great search experience for all users, whether they come from mobile or desktop devices.

There are some forms of content that simply don’t work well on a 350 pixel wide screen, unless they use a pinch to zoom format. But using that format is seen as not being mobile friendly.

Imagine you have an auto part database which lists alternate part numbers, price, stock status, nearest store with part in stock, time to delivery, etc. … it is exceptionally hard to get that information to look good on a mobile device. And good luck if you want to add sorting features on such a table.

It is a valid point that using the desktop version of a page to rank mobile results is flawed, because mobile users might be sent to something which is only available on the desktop version of a site. BUT, at the same time, a publisher may need to simplify the mobile site & hide data to improve usability on small screens & then only allow certain data to become visible through user interactions. Not showing those automotive part databases to desktop users would ultimately make desktop search results worse for users by leaving huge gaps in the search results. And a search engine choosing to not index the desktop version of a site because there is a mobile version is equally short sighted. Desktop users would no longer be able to find & compare information from those automotive parts databases.

Once again money drives search “relevancy” signals.

Since Google will soon make 3/4 of their ad revenues on mobile that should be the primary view of the web for everyone else & alternate versions of sites which are not mobile friendly should be disappeared from the search index if a crappier lite mobile-friendly version of the page is available.

Amazon converts well on mobile in part because people already trust Amazon & already have an account registered with them. Most other merchants won’t be able to convert at anywhere near as good a rate on mobile as they do on desktop, so if you have to choose between having a mobile friendly version that leaves differentiated aspects hidden or a desktop friendly version that is differentiated & establishes a relationship with the consumer, the deeper & more engaging desktop version is the way to go.

The heavy ad load on mobile search results only further combines with the low conversion rates on mobile to make building a relationship on desktop that much more important.

Even TripAdvisor is struggling to monetize mobile traffic, monetizing it at only about 30% to 33% of the rate at which they monetize desktop & tablet traffic. Google already owns most of the profits from that market.

Webmasters are better off NOT going mobile friendly than going mobile friendly in a way that compromises the ability of their desktop site.

Mobile-first: with ONLY a desktop site you’ll still be in the results & be findable. Recall how mobilegeddon didn’t send anyone to oblivion? – Gary Illyes (@methode) November 6, 2016

I am not the only one suggesting an over-simplified mobile design that carries over to a desktop site is a losing proposition. Consider Nielsen Norman Group’s take:

in the current world of responsive design, we’ve seen a trend towards insufficient information density and simplifying sites so that they work well on small screens but suboptimally on big screens.

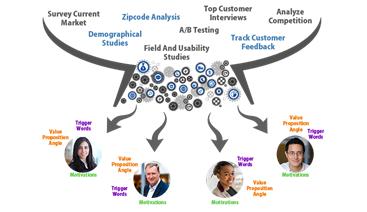

Tracking Users

Publishers are getting squeezed to subsidize the primary web ad networks. But the narrative is that as cross-device tracking improves some of those benefits will eventually spill back out into the partner network.

I am rather skeptical of that theory.

Facebook already makes 84% of their ad revenue from mobile devices where they have great user data.

They are paying to bring new types of content onto their platform, but they are only just now getting around to testing pricing for their Audience Network traffic based on quality.

Priorities are based on business goals and objectives.

Both Google & Facebook paid fines & faced public backlash for how they track users. Those tracking programs were considered high priority.

When these ad networks are strong & growing quickly they may be able to take a stand, but when growth slows & stock prices crumble, data security becomes less important during downsizing as morale is shattered & talent flees. Further, creating alternative revenue streams becomes vital “to save the company” even if it means selling user data to dangerous dictators.

The other big risk of such tracking is how data can be used by other parties.

Spooks preferred to use the Google cookie to spy on users. And now Google allows personally identifiable web tracking.

Data is being used in all sorts of crazy ways the central ad networks are utterly unaware of. These crazy policies are not limited to other countries. Buying dog food with your credit card can lead to pet licensing fees. Even cheerful “wellness” programs may come with surprises.

Want to see what the future looks like?

For starters…

About 2 months ago I saw a Facebook post done on behalf of a friend of mine. Gofundme was the plea. Her insurance wouldn’t cover her treatment for a recurring breast cancer and doctors wouldn’t start the treatment unless the full payment was secured in advance. Really? Really. She was gainfully employed, had a full time, well paying job. But guess what? It wasn’t enough although hundreds of people donated.

This last week she died. She was 38 years old. She died not getting access to a treatment that may or may not have saved her life. She died having to hustle folks for funds to just have a chance to get access to another treatment option and she died while worrying about being financially ruined by her illness. Just horrid.

Is this the society we want? People forced to beg friends on gofundme for help so they can get access to medical treatment? Is this the society we are? Is this truly the best we can do?


Source: seobook.com